A practical and targeted Docker guide: from zero to a working Docker container in an EC2 instance

This blog post describes the steps for packaging a C# .NET Core API service into a Docker image, focusing on how to run a container using the various options that Docker offers, especially for interacting with external resources.

I won’t dwell on Docker technology and its benefits; there are some great blog posts for that, and here is one of them. I’ll start from the very beginning: a plain Linux-based EC2 instance.

TL;DR

What’s on the menu?

- Installing Docker on a Linux-based EC2 instance

- Packaging the application and uploading it into our instance

- Creating a Docker image from the published .NET API binaries

- Essential Docker commands for running and managing Docker images and containers

- Setting the service’s port and managing the container’s storage

- …and more useful Docker commands

What Is Needed Before Embarking on the Journey?

The first building block is a working EC2 instance that accepts SSH connections from the web (port 22). For an easy start, you can follow my blog post “Setting a Linux-based EC2 Instance”.

The next step is installing Docker on an EC2 host. Skip to Part 2 if you already have an instance with Docker on it.

Part 1: Installing Docker on a Linux Server

If you launched an EC2 AMI that does not come with Docker pre-installed, you must install it manually. The easiest way to check whether Docker is installed is to type the command docker info. If Docker is installed, the output displays information about it; otherwise, the shell reports that docker is an unknown command.
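
The same check can be scripted, which is handy inside setup scripts. The snippet below is only a minimal sketch: has_command is a hypothetical helper name (it is not something Docker provides), built on the standard command -v shell builtin.

```shell
#!/bin/sh
# has_command is a hypothetical helper (not part of Docker):
# it succeeds when the given program can be found on the PATH.
has_command() {
    command -v "$1" >/dev/null 2>&1
}

if has_command docker; then
    echo "Docker is installed"
else
    echo "Docker is not installed"
fi
```

Note that this only tells you the docker CLI exists; docker info additionally confirms that the Docker service is reachable.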

There are differences between the various Linux distributions that affect the steps to install Docker. I’ll focus on two Linux families: Debian and Fedora. Debian is represented here by Ubuntu, whilst Amazon Linux belongs to the Fedora/Red Hat family. Running the command below presents more details about your Linux machine:

$ cat /etc/os-release

For the purpose of installing Docker, the main difference is which installation tool to use: apt or yum. More differences can be found below:

Debian

------

# Package format: *.deb files

# CLI tool for downloading: apt-get

# Custom config files: /etc/apt/sources.list.d/

# Default user login: ubuntu (on Ubuntu AMIs)

Fedora

------

# Package format: *.rpm files

# CLI tool for downloading: yum and its successor dnf

# Custom config files: /etc/yum.repos.d/

# Default user login: ec2-user (on Amazon Linux AMIs)
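
If you want a script to make the apt-versus-yum decision for you, the ID field of /etc/os-release can drive it. The following is a rough sketch under a few assumptions: the function names pick_pkg_manager and detect_os_id are made up for this example, and the ID values listed (ubuntu, debian, amzn, fedora, rhel, centos) cover only the distributions discussed here.

```shell
#!/bin/sh
# Hypothetical helper: map an os-release ID to the matching package manager.
pick_pkg_manager() {
    case "$1" in
        ubuntu|debian)           echo "apt-get" ;;
        amzn|fedora|rhel|centos) echo "yum" ;;
        *)                       echo "unknown" ;;
    esac
}

# Hypothetical helper: print the ID field of an os-release file.
# The file is sourced in a subshell so its variables don't leak out.
detect_os_id() {
    file="${1:-/etc/os-release}"
    [ -r "$file" ] || { echo "unknown"; return; }
    ( . "$file"; echo "${ID:-unknown}" )
}

os_id="$(detect_os_id)"
echo "Detected '$os_id'; package manager: $(pick_pkg_manager "$os_id")"
```

On an Amazon Linux instance the ID is amzn, so the sketch should pick yum; on Ubuntu it should pick apt-get.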

Installing Docker

The relevant installation utility for Amazon-Linux EC2 is yum, while the installation manager for Ubuntu is apt. I’ll start with yum. Firstly, we need to ensure the current packages are updated, so run the following command:

# This command attempts to update all the installed packages

$ sudo yum update -y

The next step is to install the Docker package (run with elevated permissions using sudo) and start its service afterwards. On Amazon Linux, the package in the default repositories is named docker; the docker-ce package requires adding Docker’s own repository first:

# Install Docker

$ sudo yum install -y docker

# Start the Docker service

$ sudo service docker start

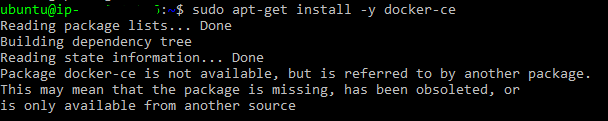

Now, let’s address Ubuntu. If you launched an Ubuntu machine, Docker’s package repository must be added first; otherwise, apt-get doesn’t recognize the docker-ce package and reports an error.

Follow the commands below to rectify the problem:

# Add the GPG key for the official Docker repository

$ curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo apt-key add -

# Add the Docker repository to APT sources

$ sudo add-apt-repository "deb [arch=amd64] https://download.docker.com/linux/ubuntu $(lsb_release -cs) stable"

# Update the package database after adding the Docker repository

$ sudo apt-get update

# Install Docker CE

$ sudo apt-get install -y docker-ce

In case of problems, you may want to follow the official installation guide on the Docker website.

Cool. Now you should have a Docker service installed and ready for action.

Wrapping-Up the Installation

Since the logged-in user is ec2-user (or ubuntu if you’re using an Ubuntu-based instance), for convenience, let’s add this user to the docker group to avoid using sudo for every Docker command (replace ec2-user with ubuntu on an Ubuntu-based instance):

$ sudo usermod -a -G docker ec2-user

To apply this permission, you need to log in again, so exit the SSH connection and reconnect. You can then verify that the Docker service is up and running without using sudo:

$ docker info

To summarize this part, the required commands for setting Docker can be consolidated in the “user data” script before launching a new Amazon-Linux-based EC2. It will ensure your newly created instance has the latest Docker version.

#! /bin/bash -ex

sudo yum update -y

sudo yum install -y docker

sudo service docker start

sudo usermod -a -G docker ec2-user

Configure Docker to Run Automatically After Boot

To check whether Docker is configured to run after boot, run the command systemctl status docker, which displays the state of the Docker service. If Docker is disabled, run sudo systemctl enable docker to enable it.

Part 2: Building a .NET Core API Application

To demonstrate this part, I wrote a simple C# .NET Core API service that will be used to exemplify Docker features. The source code is available on GitHub (it can be found here). You can clone and build it locally:

git clone https://github.com/liorksh/ManageTeams <destination-folder>

cd <destination-folder>

dotnet restore

dotnet build

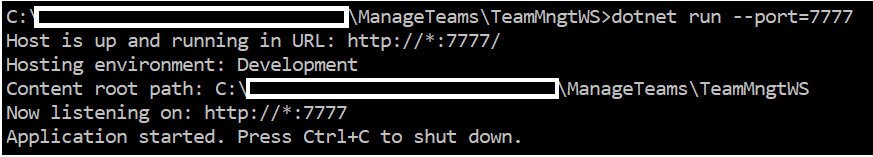

Once built, you can run it and specify the listening port (unless you want to rely on the default port):

dotnet run --port 7777

The expected result is:

This API service receives optional parameters that will be used later on: the listening port (--port) and the log file path (--logpath). You can refer to the ReadMe file for more details about this service.

Last Preparations Before Dockerizing the Service

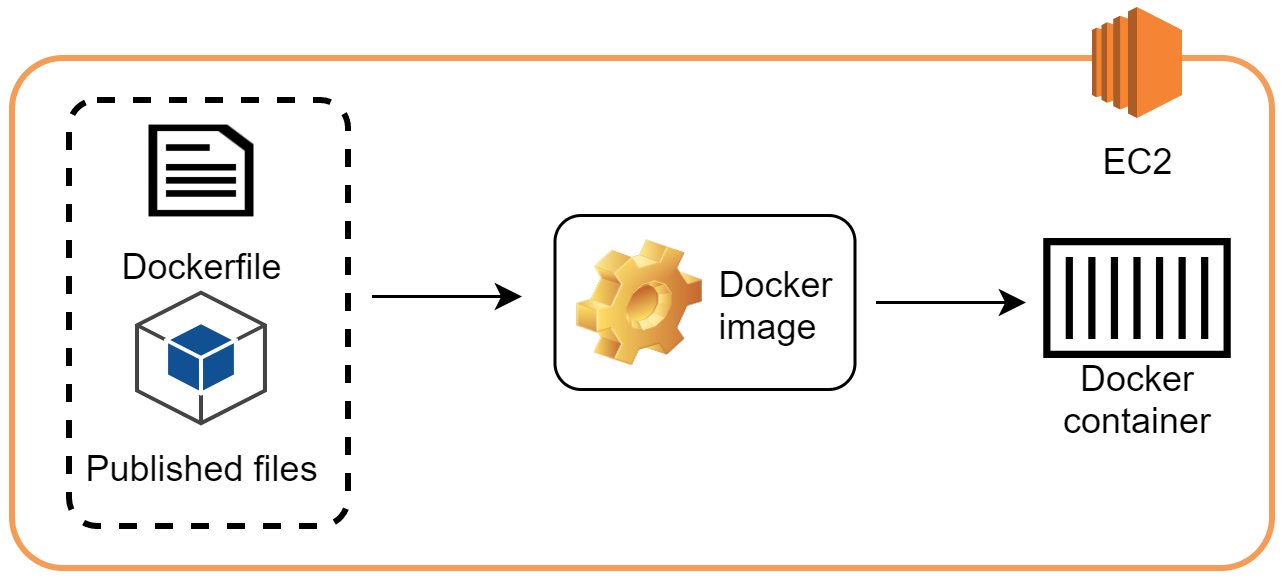

There are two more things to prepare before building a Docker image in our EC2 instance: package the application and create a Dockerfile.

Packaging the application and its dependencies is done with the command dotnet publish, which copies the required binaries and all their dependencies into a folder that will eventually be copied to the hosting system. After running dotnet publish under the C# project folder, the output is saved by default in “bin\Debug\netcoreapp2.0\publish”, but this can be changed by passing the output folder to the publish command: dotnet publish -o <folder-path>.

The next task is defining a docker file (Dockerfile, capital D without the file extension). It is essential for creating a Docker image from our binary files. The Dockerfile includes the recipe for the Docker engine to build the image. Our Dockerfile is basic, but it contains the necessary components for building an image. It is composed of:

- The working environment (aspnetcore 2.0 in our example).

- The container working directory (app).

- Where to copy the binaries from (the publish folder) and their new destination (local folder in the image itself).

- The entry point of the application (TeamMngtWS.dll in this example).

FROM microsoft/aspnetcore:2.0

WORKDIR /app

COPY ./publish .

ENTRYPOINT ["dotnet", "TeamMngtWS.dll"]

In order to build the image successfully, the publish folder should be accessible and located in the same folder as the Dockerfile; that is why the source path in the COPY instruction is ./publish.
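
Since a misplaced publish folder is an easy mistake to make, the layout requirement above can be verified before building. This is a small sketch, not part of the original project: check_build_context is a hypothetical function name, and it assumes the folder and file names used in this post (Dockerfile and publish).

```shell
#!/bin/sh
# Hypothetical pre-build check: the Dockerfile and the publish folder
# must sit side by side in the folder that is passed to docker build.
check_build_context() {
    dir="${1:-.}"
    rc=0
    [ -f "$dir/Dockerfile" ] || { echo "missing: $dir/Dockerfile" >&2; rc=1; }
    [ -d "$dir/publish" ]    || { echo "missing: $dir/publish/" >&2; rc=1; }
    return "$rc"
}

if check_build_context .; then
    echo "Folder layout looks fine; the image can be built from here"
else
    echo "Fix the folder layout before building the image"
fi
```

Running it from the folder you are about to upload catches the problem locally, before the files ever reach the EC2 instance.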

Great. Now we’re ready for the next phase — uploading the necessary files onto the Linux server👌.

Part 3: Moving Into the Cloud

In this example, I launched an Ubuntu-based EC2 instance. Under the ubuntu user, I created a local folder to host my application (the myapp directory).

Finally, we’re ready to copy the publish folder and the Dockerfile into the server. The simplest way to copy the files is by using the scp utility (short for Secure Copy); you can opt for an application like FileZilla if you are fond of a UI.

The command below copies the essential files (binaries and configuration files) from our local computer to the EC2 instance, so don’t forget to open port 22 in the security group that is associated with your EC2 instance.

scp -i <pem file path> -r <folder/file to upload> <Linux-user>@<ip address>:<destination folder>

# Example

scp -i /temp/my-key.pem -r /temp/my-application ubuntu@1.1.1.1:~/myapp/

Building the Application

After copying both the publish folder and the Dockerfile, we’re ready to build a Docker image, which is the fundamental unit of our packaged service. The build command receives the build context folder (the folder that contains the Dockerfile and the publish folder) as an argument and then creates an image based on the Dockerfile’s content.

docker build <docker-file-location> -t <image-name>

# Example

$ docker build . -t myteams

Part 4: Ready? Run!

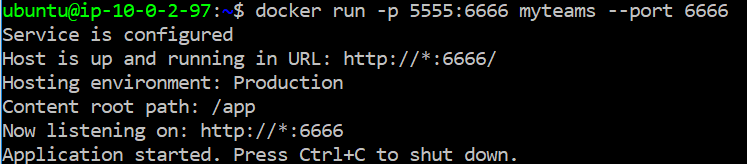

Our API service listens to a certain port to interact with external clients. As opposed to other applications that are hosted on the server, our service is running behind Docker, so in fact external clients interact with Docker rather than directly with our application. We need to map an external port on the host to the internal port that the service listens on inside the container:

# Running the image with a published port (external:internal)

docker run -p <external-port>:<internal-port> <image-name> [IMAGE Arguments]

# Example

$ docker run -p 5555:6666 myteams --port 6666

To sum up the ports in the example above: our API service is hosted in a container that is accessed externally via port 5555. Internally, the service listens on port 6666, which Docker maps to port 5555 on the host. This translation is transparent to the API service.
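
One detail that is easy to miss is that the internal port appears twice in the command: once in the -p mapping and once as the service’s own --port argument, and the two must match. The sketch below only prints the command rather than running it; run_cmd_for is a hypothetical helper name used for illustration.

```shell
#!/bin/sh
# Hypothetical helper: print a docker run command in which the container
# port of the -p mapping and the service's --port argument stay in sync.
run_cmd_for() {
    host_port="$1"
    container_port="$2"
    image="$3"
    echo "docker run -p ${host_port}:${container_port} ${image} --port ${container_port}"
}

run_cmd_for 5555 6666 myteams
# prints: docker run -p 5555:6666 myteams --port 6666
```

If the two values drift apart, Docker still publishes the external port, but nothing inside the container listens on the mapped internal port, so requests are not answered.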

Accessing the API Service

Once the container is up and running it can be accessed internally from within the server:

# calling the About method

$ curl http://localhost:5555/About

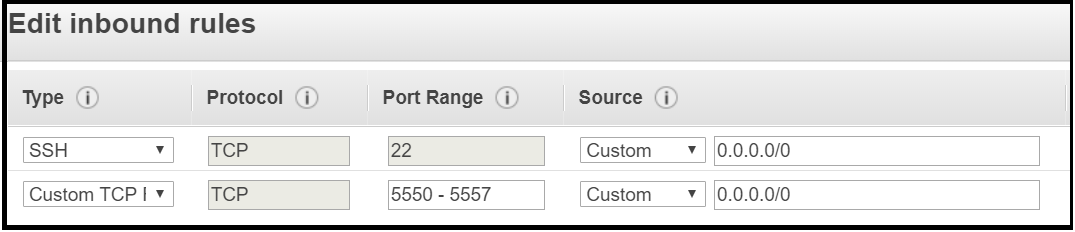

The API service also can be accessed externally from the web, after allowing the incoming traffic at the security group of the EC2 instance. I opened a range of ports to allow traffic for more than one container:

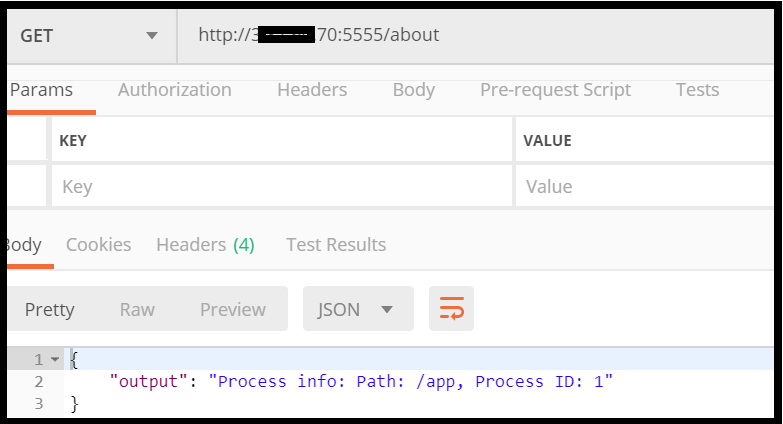

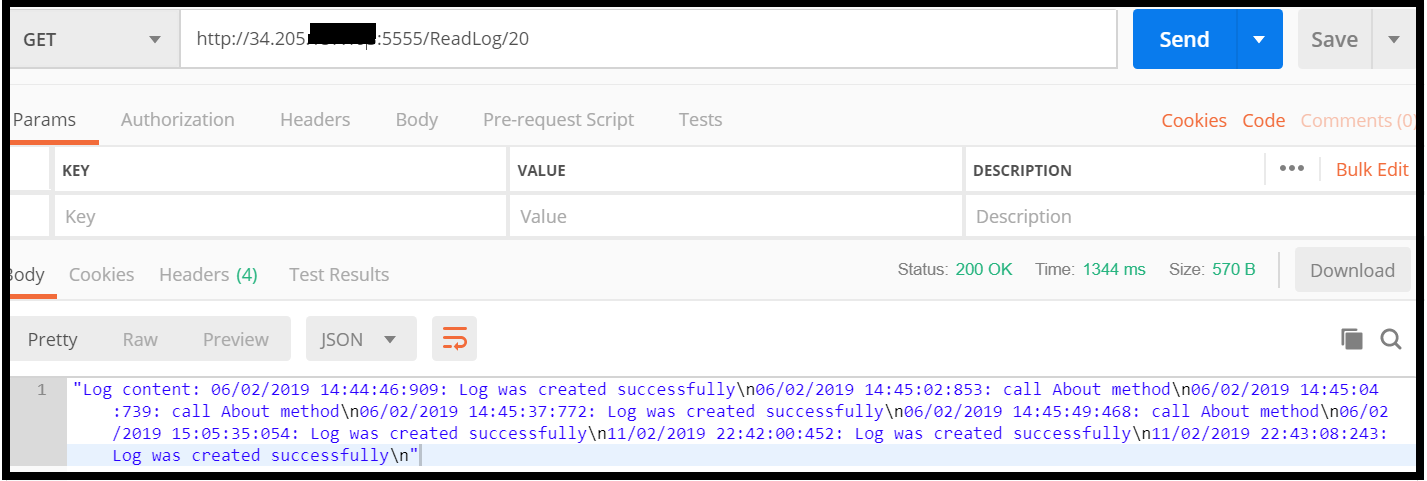

I used the Postman application to access the API service remotely. The expected result is:

That’s a big step forward 👏. Now we’re ready to dive into Docker.

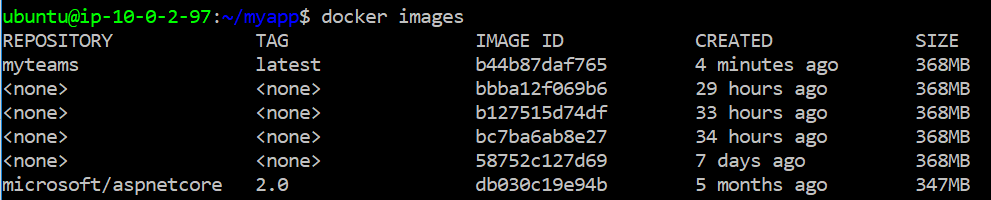

Managing the Container Lifecycle

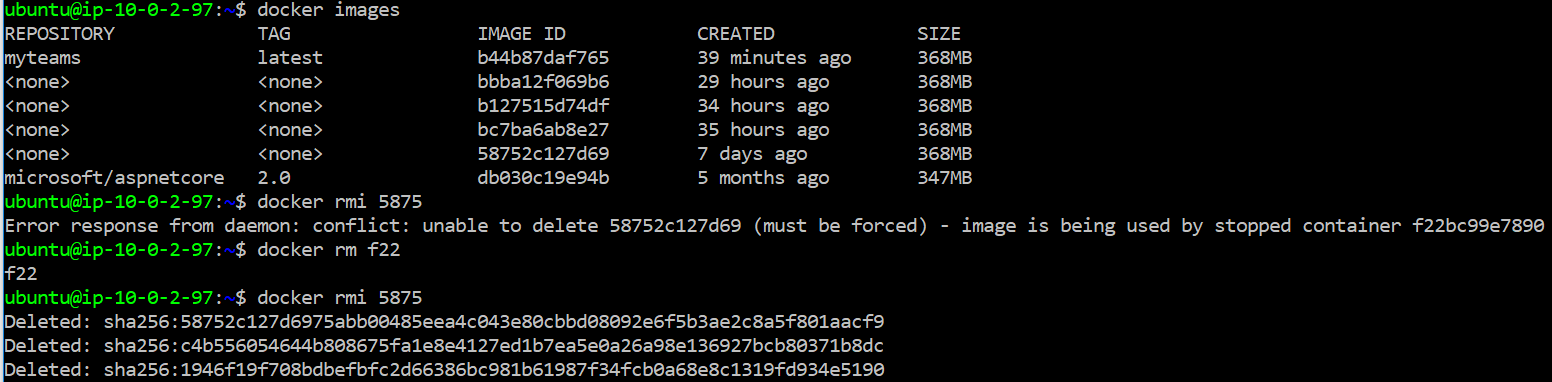

So, we have seen how to create an image and run a container, which is a good start. Let’s continue by reviewing some basic commands. The first is the docker images command, which displays the images stored on the host:

To list all images, including the intermediate ones that are hidden by default, run docker images -a.

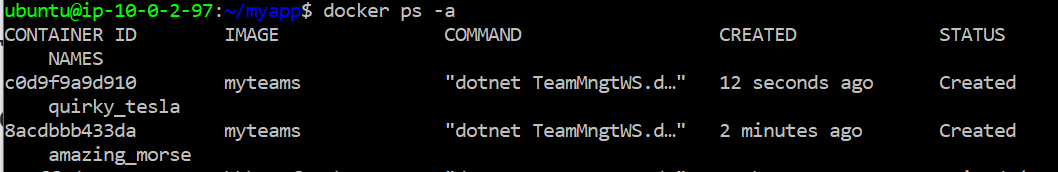

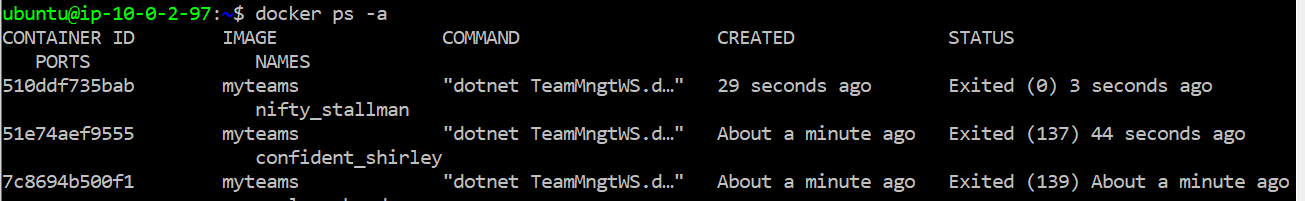

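The command docker ps displays only the running containers, whilst the command docker ps -a displays all the containers (including exited and created ones). Showing only the exited containers is done by running docker ps -a --filter status=exited; the command docker ps -l shows only the most recently created container.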

The command docker run, which was mentioned earlier, in fact executes two commands together: docker create and docker start.

A new container is created after the execution of docker create. Don’t forget to run this command with the relevant parameters for the container you’re creating.

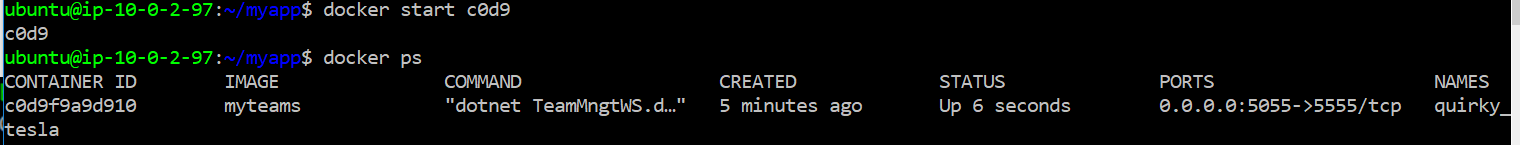

After creating the container, you can execute docker start followed by its name or ID (the first characters of the ID are enough, as long as they are unique). The container starts with the parameters given in the docker create command. In fact, you can use the start command not only to start newly created containers but also to start containers that were stopped earlier.

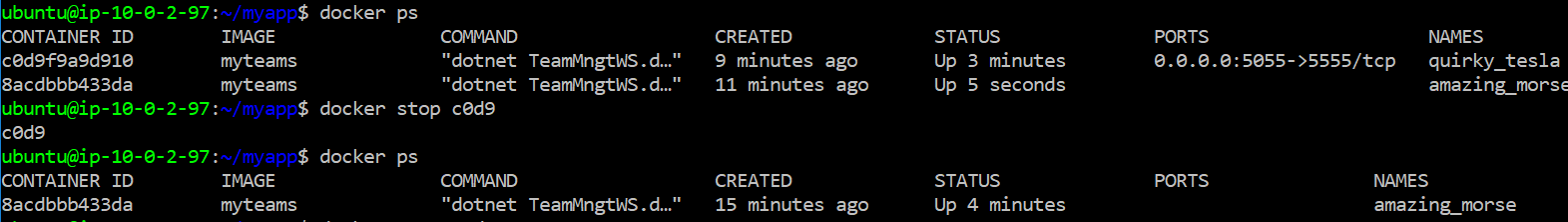

Stopping a container while it is running is straightforward: docker stop <container-id>.

There is also an abrupt way to stop a container: docker kill <container-id>, which is not a graceful shutdown, as opposed to the stop command. After running the kill command, the exit code of the container is a non-zero value.
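
The non-zero exit code comes from how signals work: docker stop sends SIGTERM to the container’s main process (and falls back to SIGKILL after a grace period), while docker kill sends SIGKILL right away by default. A process that dies from a signal reports 128 plus the signal number. The sketch below illustrates this with a plain sleep process instead of a container, so it runs without Docker; exit_code_after_signal is a hypothetical helper name.

```shell
#!/bin/sh
# Illustration with an ordinary process (no Docker involved):
# start a background sleep, signal it, and print its exit code.
exit_code_after_signal() {
    sleep 30 &
    pid=$!
    kill -s "$1" "$pid"
    rc=0
    wait "$pid" 2>/dev/null || rc=$?
    echo "$rc"
}

echo "after SIGTERM: $(exit_code_after_signal TERM)"   # 143 = 128 + 15
echo "after SIGKILL: $(exit_code_after_signal KILL)"   # 137 = 128 + 9
```

A killed container typically shows exit code 137 in docker ps -a for the same reason. An application that handles SIGTERM and shuts down cleanly can still exit with 0 after docker stop.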

Removing Images/Containers

If you deem an old version of a container is no longer required, you can remove it. The command is docker rm <container-id>. This container will not be displayed anymore.

Similarly, removing an image is done by running docker rmi <image-id>. Sometimes, Docker reports that an image has dependencies (for example, a container that was created from it) and thus cannot be deleted. In this case, you can either remove these dependencies prior to the deletion or use the flag -f to force the deletion. Deleting an image removes its layers, therefore you’ll most likely see more than one deleted item. The scenario below demonstrates a deletion after solving the image’s dependency.

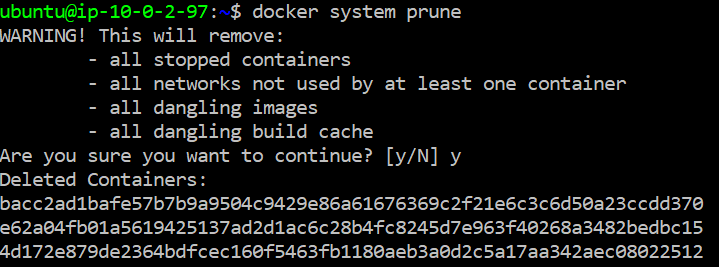

There is another way to remove containers and images: running the command docker system prune. This command is more extensive, since it removes all the stopped containers and dangling images in one shot. It is a useful command for housekeeping:

Diving Into the Run Command

The docker run command is a central one. It receives many optional arguments that set the way containers are loaded, run and exited. You can work more efficiently by knowing this command’s various options. Let’s review some beneficial arguments:

# Run command format

$ docker run [OPTIONS] <image-name> [IMAGE Arguments]

# Assigning a name to the container (instead of a generated name)

--name <container-name>

# Publishing a container port on the host

-p <external-port>:<internal-port>

--publish <external-port>:<internal-port>

# Attaching a volume and mapping it to a folder in the container

--mount source=<volume-name>,target=<container-folder>

# Removing the container immediately once it is exited/stopped/killed.

# If you're using this flag, you can't start the container afterwards, since it will be deleted

--rm

# Starting the container without hooking the CLI to the container

--detach

# Run interactively (-i) and allocate a terminal (-t)

-it

# Setting environment variables

--env KEY=VALUE

Part 5: Let’s play! Sharing a Container’s Storage and Other Useful Commands

Now that you know how to manage a container’s lifecycle, let’s see how you can get more out of it by accessing the container’s storage.

The container’s storage is ephemeral. It will be gone after the container has been removed. Wisely, Docker supports the creation of managed volumes that can be used as persistent storage.

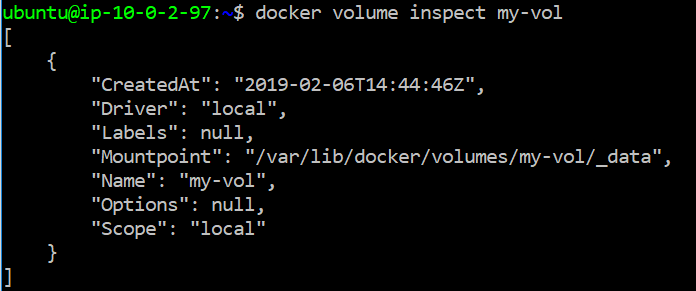

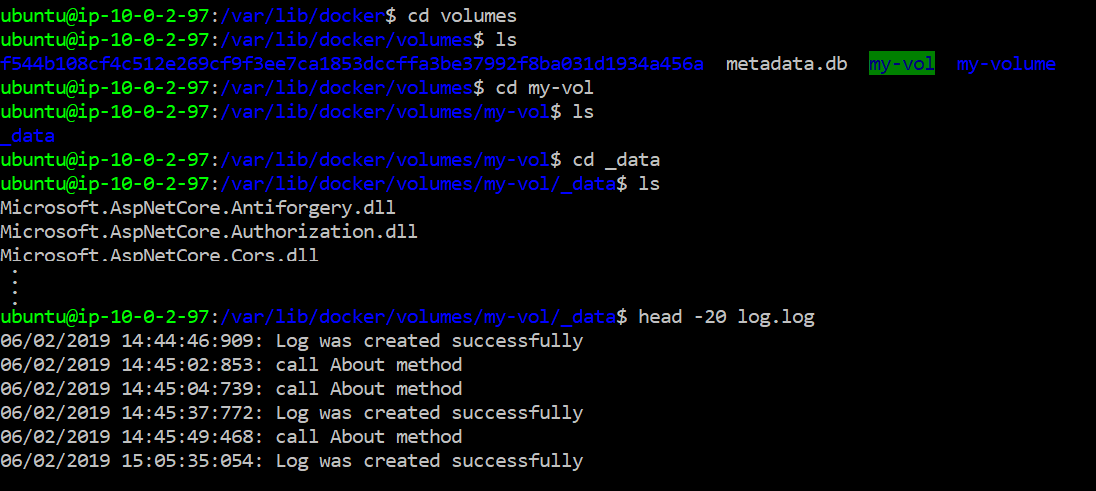

The basic command to view the existing volumes is docker volume ls. In order to demonstrate how a volume is not tightly coupled with a specific container, we shall create a new volume, named my-vol, by running the command docker volume create my-vol.

Our API service writes a log file locally, relative to the folder in which the application runs. The log file’s path is provided as an argument for the service (--logpath). In order to map a folder inside the container to a volume, we use the --mount parameter. The command below mounts an existing volume to a container and sets the log file’s path to the mounted folder:

$ docker run -p 5555:62222 \
    --name team \
    --detach \
    --rm \
    --mount source=my-vol,target=/app \
    myteams --port 62222 --logpath=/app

# Note: the last two parameters are relevant to the API service

The container above is deleted automatically after it is stopped or killed (--rm). It allows using the same name for the container at each iteration.

I ran this command several times. As a result, the log file accumulated the messages logged by each new container. This can be seen by calling the ReadLog method, which prints the log messages. The log file persists its content on the volume. Trying to run the same command without the mount will yield the opposite: the log file will be created on the container’s ephemeral storage and thus will not accumulate the log messages.
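
The experiment can be scripted roughly as follows. Treat it as a sketch with several assumptions: the run wrapper and the DRY_RUN variable are hypothetical conveniences (by default the script only prints the commands), the URL path /ReadLog is a guess based on the /About call shown earlier, and the two-second pause is an arbitrary wait for the service to start.

```shell
#!/bin/sh
# DRY_RUN=1 (the default here) only prints each command;
# set DRY_RUN=0 on a host with Docker to actually execute them.
DRY_RUN="${DRY_RUN:-1}"

# Hypothetical wrapper: print or execute the given command.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

run docker volume create my-vol

# Start a fresh container three times; all of them write to the same volume.
for i in 1 2 3; do
    run docker run -p 5555:62222 --name team --detach --rm \
        --mount source=my-vol,target=/app \
        myteams --port 62222 --logpath=/app
    run sleep 2
    # Assumed endpoint path; adjust it to the service's actual route.
    run curl -s http://localhost:5555/ReadLog
    run docker stop team
done
```

With the mount in place, the log printed in each iteration should contain the messages of the previous iterations as well. If Docker reports that the name team is still in use, a short pause after docker stop should give --rm time to remove the container.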

One more useful tip: you can explore the existing volumes by running docker volume inspect <volume-name>:

The folders under the docker directory are accessible only to authorized users; nevertheless, this can be changed. You can grant the relevant permissions along the path until reaching the directory that was mounted to your container. In this example it is my-vol. With that, your container’s files are accessible regardless of the container’s state. That breaks the container’s isolation and gives you more flexibility in designing and implementing your solutions.

For further reading about Docker volumes, refer to https://docs.docker.com/storage/volumes/.

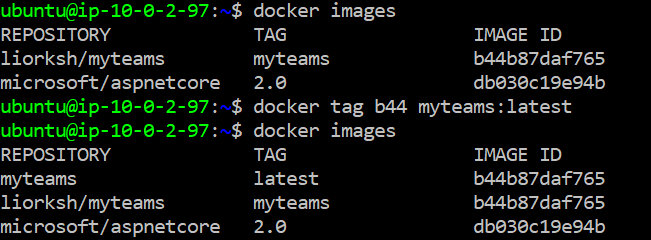

Tagging Image and Creating an Image From a Container

Before wrapping up, I’d like to quickly cover two commands that I consider useful for managing images: tag and commit.

The tag command creates a new name for an existing image. The new tag points to the same image and allows you to refer to it separately.

docker tag <image-id> <image-name>

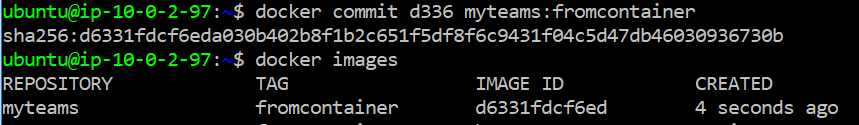

The second command is commit. Docker enables the creation of images from an existing container. That means, for example, the local storage of the container becomes the local storage of the new image.

docker commit <container-id> <new-image-name>

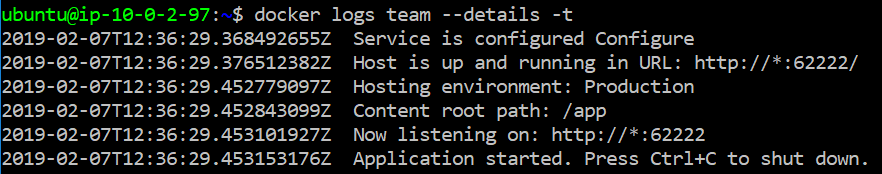

Reviewing the Container’s Output

The very last command for this post is logs. You can view the activities of the container, even after it was stopped, by running docker logs <container-name>. This command displays the container application’s output as it was generated during its lifetime. It can be useful for retrospective analysis. It is also useful for viewing unhandled exceptions.

Summarizing the Docker Commands (a Short Glossary)

To recap, I grouped the various Docker commands that were mentioned in this post for the reader’s convenience. These fundamental commands are a good start to explore and use the Docker containers’ world.

# Shows all images

docker images

docker images -a

# Shows all running containers

docker ps

# Shows all containers, including the stopped ones

docker ps --all

docker ps -a

# Starting the container

docker start <container-id>

# Stopping the container (graceful shutdown)

# The container can be resumed with the docker start command

docker stop <container-name>

# Killing a running container (abrupt stop)

docker kill <container-name>

# Deleting a container

docker rm <container-name>

# Deleting an image

docker rmi <image-id>

# Creating a new image tag based on an existing image

docker tag

# Creating a new image based on an existing container

docker commit

# Showing the CLI output of the container

docker logs

# Managing volumes

docker volume [commands]

Want to explore more?

My API service is available on GitHub. In addition, you can download my Docker image from Docker Hub, under the repository myteams. Run the command docker pull liorksh/myteams to download it locally. You are encouraged to use them for further exploration and enhancements.

Last Words

This is only the tip of the iceberg, but after you have learned the foundations of Docker you can take it forward easily. Docker has much more to offer. We haven’t touched on managing a container’s resources (CPU, RAM) or how to run a fleet of containers, but now you are in a good position to explore more.

Thanks for reading. Hope you enjoyed this post and find its content useful. As always, your comments are most welcome.

Continue building and creating!

— Lior